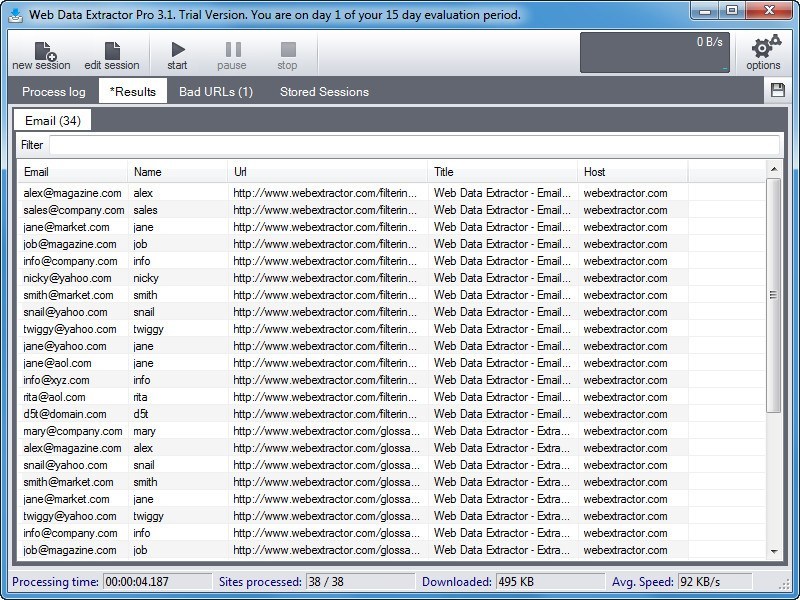

There's a vast amount of information out there on the Internet. Extracting and aggregating data from public-domain websites and other digital sources, also known as web data scraping, can give you a significant business edge over your competitors. Data extraction generates insights that can help companies analyze the performance of a particular product in the marketplace, track customer sentiment expressed in online reviews, monitor the health of their brand, generate leads, or compare price information across different marketplaces. It also gives researchers a powerful tool to study the performance of financial markets and individual companies, guide investment decisions and shape new products. There are many non-financial uses for data extraction too, such as scraping news websites to monitor the quality and accuracy of stories or to track trends in reporting.

The extracted data is most likely delivered as a spreadsheet or in some kind of machine-readable data exchange format such as JSON or XML. It can then be used for other purposes, either displayed to humans via some kind of user interface or processed by another program.
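To make the delivery formats concrete, here is a minimal sketch in Python of how already-extracted records might be written out as JSON and as CSV (which a spreadsheet can open), plus a simple price comparison. The records, field names and helper functions (`to_json`, `to_csv`, `cheapest`) are hypothetical and exist only for illustration.

```python
import csv
import io
import json

# Hypothetical records, standing in for whatever an extractor returned.
records = [
    {"marketplace": "shop-a", "product": "Kettle", "price": 24.99},
    {"marketplace": "shop-b", "product": "Kettle", "price": 22.49},
]


def to_json(rows):
    """Serialize the records as a JSON array for another program to consume."""
    return json.dumps(rows, indent=2)


def to_csv(rows):
    """Serialize the records as CSV text, using the first row's keys as the header."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(rows[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def cheapest(rows):
    """Return the record with the lowest price across marketplaces."""
    return min(rows, key=lambda row: row["price"])


print(to_json(records))
print(to_csv(records))
print(cheapest(records)["marketplace"])
```

The same list of dictionaries feeds both outputs, so the choice between a spreadsheet-friendly file and a machine-readable exchange format can be left to whoever consumes the data.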

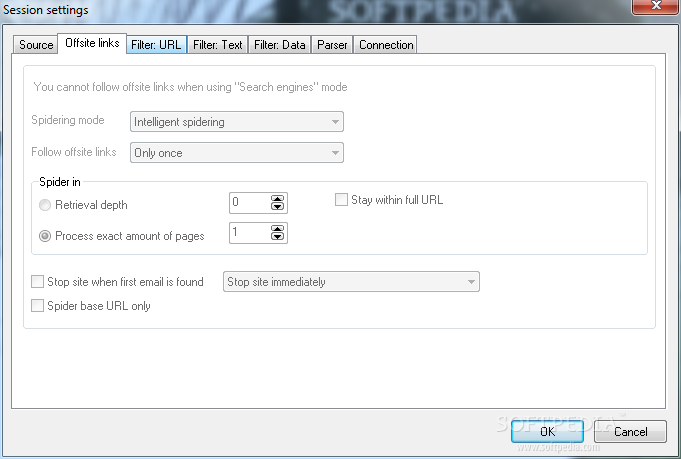

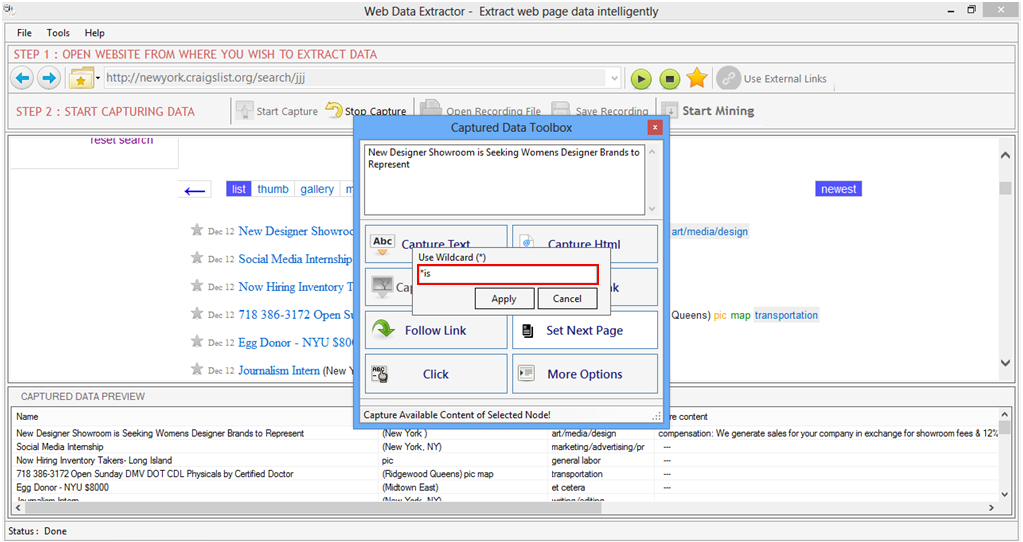

Extracting data from Internet websites, or from a single web page, is often referred to as web scraping. The extracted information is typically stored and structured to allow further processing and analysis. Extraction can be performed manually by a person cutting and pasting content from individual web pages, but this is likely to be time-consuming and error-prone for all but the smallest projects. Hence, data extraction is typically performed by some kind of data extractor: a software application that automatically fetches and extracts data from a web page (or a set of pages) and delivers this information in a neatly formatted structure.
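As a rough illustration of what such a data extractor does, the sketch below uses Python's standard-library `html.parser` to pull product names and prices out of a page and return them as structured records. The sample HTML, the `product`/`name`/`price` class names, and the `ProductExtractor` and `extract_products` names are all assumptions made up for this example. The fetching step is left out, so the page is supplied as a string.

```python
import json
from html.parser import HTMLParser

# Hypothetical sample page; a real extractor would first fetch this over HTTP.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">31.50</span></li>
</ul>
"""


class ProductExtractor(HTMLParser):
    """Collects the text of name and price spans inside each product item."""

    def __init__(self):
        super().__init__()
        self.records = []     # finished records
        self._current = None  # record being built, or None outside a product
        self._field = None    # field whose text we are currently inside

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self._current = {}
        elif (
            tag == "span"
            and self._current is not None
            and css_class in ("name", "price")
        ):
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that sits inside a field we care about.
        if self._field is not None:
            previous = self._current.get(self._field, "")
            self._current[self._field] = (previous + data).strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current is not None:
            self.records.append(self._current)
            self._current = None


def extract_products(html):
    """Parse the HTML and return a list of {"name": ..., "price": ...} dicts."""
    parser = ProductExtractor()
    parser.feed(html)
    parser.close()
    return parser.records


records = extract_products(SAMPLE_HTML)
print(json.dumps(records, indent=2))
```

Real pages are rarely this tidy, so a production extractor would also need to handle missing fields, malformed markup and changes to the page layout.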
